A human brain holds, on average, one lifetime of knowledge, and then it dies. Every technique, every story, every map of the territory accumulated inside it — the name of the plant that heals, the angle of the spear throw, the face of the ancestor — goes with it. Evolution gave us language as a partial fix: knowledge that can be spoken can outlast the speaker, if someone else hears it and repeats it. Oral tradition is the first external memory system. It is also the most fragile: dependent on faithful transmission, distorted by each relay, bounded by the range of a voice and the attention of a listener.

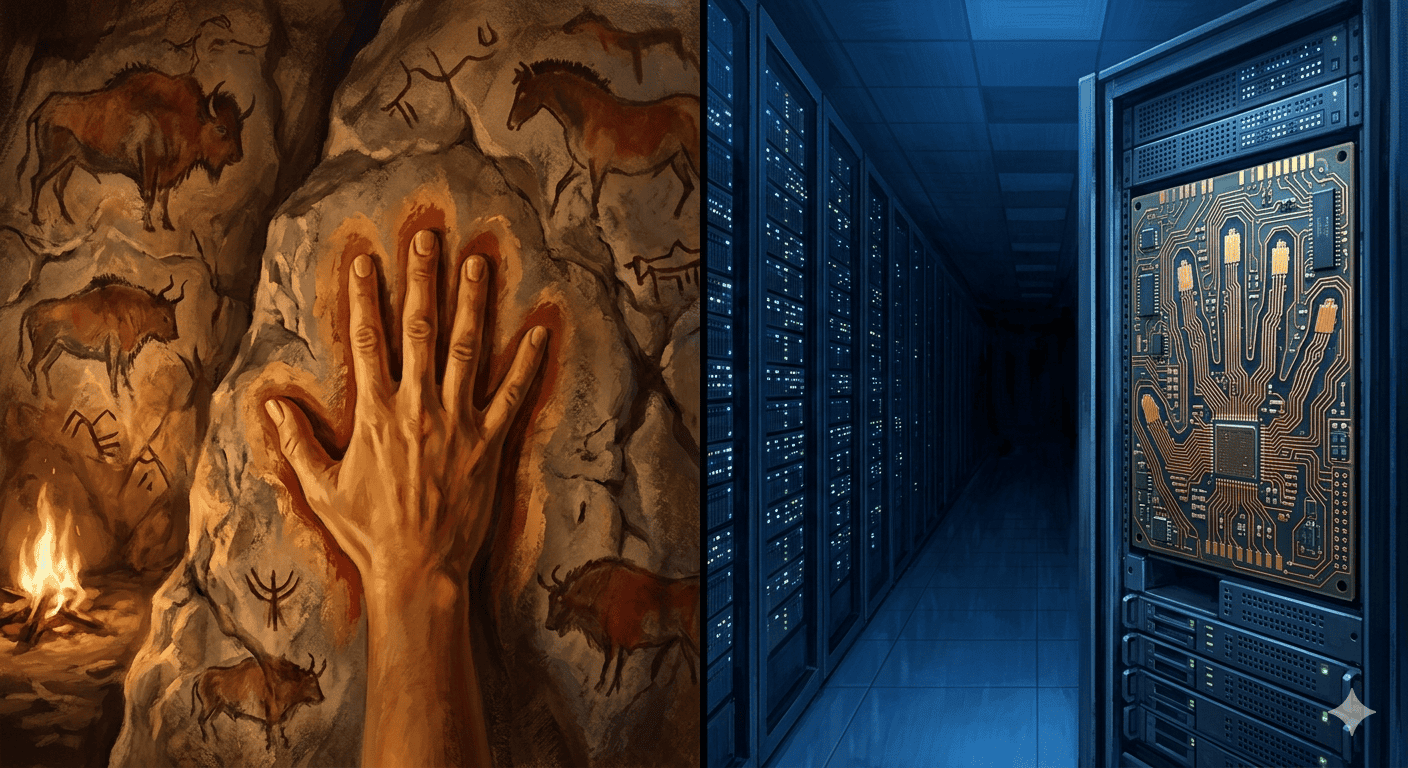

The hand pressed against the cave wall at Altamira, around thirty-six thousand years ago, changed the terms of the problem. The animal painted on the rock does not require a narrator. The mark persists without a human present to carry it. This is the founding gesture of every technology that follows — and every technology we have called “communication” or “information” since then is not a new invention but the same gesture at a different scale.

I. The problem that never changed

The cave paintings at Altamira (the oldest marks roughly 36,000 years old) and Lascaux (roughly 17,000 years old) were not art in the modern sense of decoration, self-expression, or aesthetic pleasure. They were the first attempt to decouple knowledge from the body entirely. Whether they recorded migrations of animals, ritual knowledge, or territorial claims, the operational insight was the same: if you put it on the wall, you don’t have to be there to transmit it. The wall outlasts the painter.

Oral tradition predates this by hundreds of thousands of years. Its transmission fidelity is high in the short run — a story told the same way ten thousand times develops a kind of crystalline stability, the epic and the prayer and the genealogy functioning as error-correcting codes. But it degrades over distances and generations. You need a chain of trustworthy narrators, each capable of perfect recall, with no breaks. The chain breaks. The knowledge is lost. Writing solved this by eliminating the chain entirely.

II. Four eras, one project

Storage — preservation. Cuneiform on clay tablets (~3400 BCE), hieroglyphics on stone, ink on papyrus and leather — writing solved the relay problem of oral tradition at a stroke. A written text transmits with zero loss across the relay: the scribe does not need to understand what he copies. More importantly, writing allows a person to speak to someone not yet born. The library is the natural sequel: a building whose function is to serve as the external brain of a civilization. The librarian is the first information professional — the person whose job is not to know things but to know where things are.

Organization — structure. Once the volume of written knowledge exceeds what any single mind can survey, the problem shifts from preservation to retrieval. Melvil Dewey and his decimal system in 1876; card catalogues; and eventually, Edgar Codd’s relational database model (1970) — the indexed table, the schema, the query. The database architect is the librarian’s successor: not concerned with the content of the knowledge but with the structure that makes it findable. Both are solving the same problem as the cave painter, one step downstream.

Retrieval — access. The Internet connects the libraries. The Web makes them navigable without physical presence. Search engines — PageRank’s key insight being that the importance of a document can be inferred from what other documents link to it — automate the librarian’s referral function at planetary scale. But the burden remains on the human: you must know what to ask. The search engine returns the book. You still have to read it.
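The link-counting idea can be made concrete with a small sketch. What follows is a simplified power-iteration version of PageRank, not the production algorithm of any search engine. The four-page `toy_web` graph, the function name `pagerank`, and the damping value of 0.85 are illustrative assumptions, not something taken from this essay.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank over a dict mapping page -> pages it links to."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page keeps a small baseline share, independent of links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            if outgoing:
                # A page splits its current rank evenly among its link targets.
                share = damping * rank[page] / len(outgoing)
                for target in outgoing:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: three pages point at "library".
toy_web = {
    "library": ["catalogue"],
    "catalogue": ["library"],
    "essay": ["library", "catalogue"],
    "blog": ["library"],
}

ranks = pagerank(toy_web)
most_important = max(ranks, key=ranks.get)
```

In this toy graph `most_important` comes out as `"library"`, purely because of who links to it. Nothing in the code ever reads the content of a page, which is the sense in which the referral function is automated while the reading is still left to you.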

Synthesis — interaction. This is where large language models enter. The paradigm shift is not in the quantity of knowledge accessible but in the interface. A search engine is a pointer: it tells you where the answer might be. An LLM is a compression: it has internalized the relationships between all the documents in its training corpus into a high-dimensional mathematical space, and it can navigate that space in natural language. You no longer retrieve the book. You ask the space what the book would say.

III. What the attention mechanism actually is

The transformer architecture (Vaswani et al., Attention Is All You Need, 2017) solved the core limitation of its predecessors. Recurrent neural networks processed sequences token by token, accumulating a hidden state that degraded over long inputs: the model’s memory of early context faded as the sequence lengthened, in a way uncannily analogous to the transmission losses of oral tradition. The transformer discards recurrence entirely and relies on attention alone. Every token in a sequence can attend directly to every other token (in a text generator, to every token that precedes it), with learned weights determining relevance. The model can look at any word in the document and ask: given where I am now, which of the other words in this text matter most to predicting the next one? This is, functionally, what a skilled human reader does: not reading left-to-right with equal attention, but weighting, jumping, cross-referencing. The librarian’s focus, formalized in mathematics.
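For readers who want to see the weighting step itself, here is a minimal sketch of scaled dot-product attention, the operation the 2017 paper writes as softmax(QKᵀ / √d_k)V. It is a toy under stated assumptions: the two-dimensional `queries`, `keys`, and `values` are made-up numbers, and the learned projections, multiple heads, and the causal mask that a real model uses are all left out.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    width = len(values[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this token's query against every token's key.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # The output is a weighted mix of all the value vectors.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values)) for j in range(width)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical vectors for three tokens; in a real model these come from
# learned projections of the token embeddings.
queries = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

outputs, weights = attention(queries, keys, values)
```

Each row of `weights` sums to 1 and says how much one token draws on each of the others. The third token, whose query overlaps most with its own key, gives itself the largest weight. Every token is scored against every other in one pass, with no hidden state carried from step to step, which is the contrast with recurrent networks drawn above.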

IV. Why the surprise, and why the disappointment, are both correct

A transformer trained on the text of human civilization can write poetry, debug code, explain quantum mechanics, and negotiate tone with a subtlety that feels like empathy. This is genuinely extraordinary, and the people who shrug at it have not looked carefully.

The disappointment is also warranted. LLMs do not know anything in the way a person knows. They have no model of the world independent of the text they were trained on; they have no persistent memory across conversations; they confabulate with the same syntactic fluency they use to report established facts; and they have no stake in being right. They are engines of probability, not engines of truth. The mistake — which the industry makes constantly and the public makes understandably — is to attribute sentience to linguistic fluency. The interface is so human (language) that we project human qualities onto it.

But as the historical progression makes clear, an LLM is the culmination of the storage and retrieval project, not the beginning of the understanding project. It is the most sophisticated external brain we have built. It is not an internal brain at all. The sense that it doesn’t really know anything is correct — and it is exactly what you would predict if you understood what stage of the forty-thousand-year project we have actually reached. Vannevar Bush, writing in 1945 about his imagined Memex — a machine for associative memory retrieval, the conceptual ancestor of both hypertext and the attention mechanism — grasped the goal more clearly than many who work with LLMs today: the aim was never to replicate thought, but to extend reach.

The disappointment comes from expecting the wrong thing. We have built something that can retrieve and recombine the recorded output of human civilization at a speed and scale no previous technology approached. That is already remarkable. We have not built something that understands. Whether that is next, or whether it is a different project entirely, is a question neither the history nor the architecture settles.

V. “It is written”

Paul Simon, 1964: the words of the prophets are written on the subway walls / and tenement halls. Simon’s image is an inversion of the sacred — wisdom that once came from the mountain now appears on the walls of the underground, anonymous, spray-painted, available to anyone who passes through. The externalization is complete: the prophet doesn’t need to be present; the message is on the wall; the wall will outlast the prophet.

Borges imagined The Library of Babel — a library containing all possible books, infinite and navigable by no one. The Internet and the LLM are its partial, practical realization: not all possible books, but most actual ones, navigable by anyone with a browser. The difference between the two is the compression. Borges’s library was unsearchable because it lacked structure. Ours is searchable because we spent five thousand years building the structures — the catalogue, the index, the link, the embedding — that let us find what we need inside it.

The progression from Lascaux to the transformer is the same gesture, scaled. From the animal painted on the cave wall to the entire written output of civilization compressed into a vector space, available to anyone with a browser. We have not created a new mind. We have built a more sophisticated way to hold the minds of everyone who came before us.

The words of the prophets are written: in floating-point numbers, distributed across data centers on three continents, returned to you in natural language, with a small probability of hallucination. The medium has changed. The project is the same.

Further reading

- Ashish Vaswani et al., Attention Is All You Need (2017)

- Edgar Codd, A Relational Model of Data for Large Shared Data Banks (1970)

- Vannevar Bush, As We May Think (1945, The Atlantic)

- Jorge Luis Borges, The Library of Babel, in Ficciones (1944)

- Marshall McLuhan, Understanding Media (1964)

- Paul Simon, The Sound of Silence (1964)