I. The parable

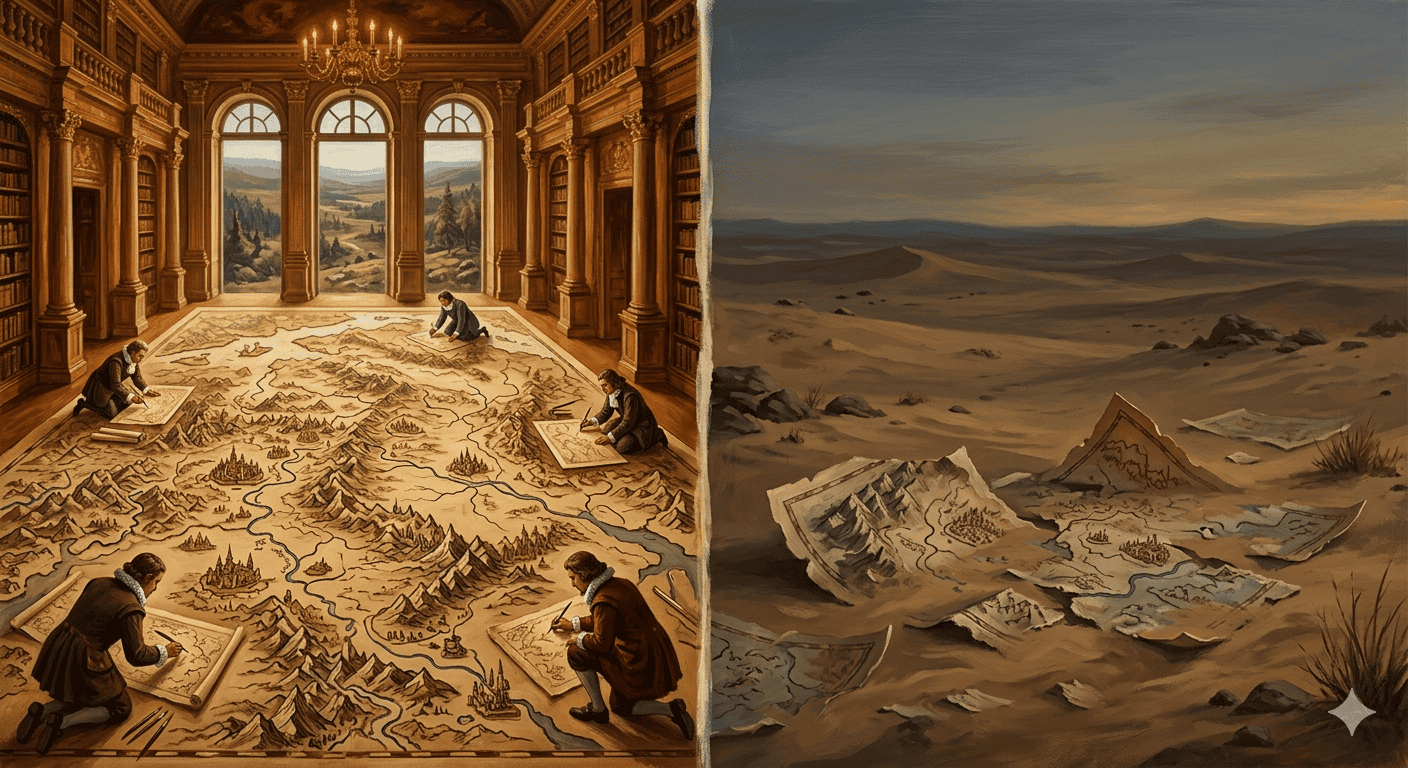

In 1946, Jorge Luis Borges published a one-paragraph parable. He attributed it to a fictional traveler, Suárez Miranda, and later collected it in El Hacedor (1960), the book published in English as Dreamtigers. The parable describes an empire whose cartographers, unsatisfied with every previous map, built one at the only scale that could not lie: one to one, the size of the empire itself, matching it point for point. The map was complete. It was also useless. Subsequent generations, with more practical priorities, let it decay in the western deserts.

Borges called it Del rigor en la ciencia — “On Exactitude in Science.” He was writing about epistemology, about the hubris of perfect representation. He was also, without knowing it, writing the prologue to a paper that would not exist for another seventy-one years.

II. How we got the map — backprop, attention, scale

In 2017, eight researchers at Google published Attention Is All You Need. It was not, on its face, a dramatic paper. It proposed a new architecture for sequence modeling. But three things converged, and the convergence was the event.

The first was backpropagation. In 1986, Rumelhart, Hinton, and Williams gave neural networks their engine: a method for tuning millions of parameters by measuring how wrong a prediction was and propagating that error backward through the network, adjusting each weight in proportion to its contribution to the mistake. Without backprop, there is no deep learning. For decades after 1986, it produced classifiers and voice recognizers. It did not produce language.
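
The mechanism fits in a few lines. What follows is a minimal sketch, not the 1986 paper's formulation: a toy network with one sigmoid hidden unit and two weights, trained on a single invented example. The starting weights, learning rate, and training pair are all assumptions chosen for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_step(w1, w2, x, target, lr):
    """One forward and one backward pass through a two-weight network."""
    # Forward pass: input -> sigmoid hidden unit -> linear output.
    h = sigmoid(w1 * x)
    y = w2 * h
    loss = 0.5 * (y - target) ** 2

    # Backward pass: the chain rule carries the error from output to input.
    d_y = y - target                 # dLoss/dy
    d_w2 = d_y * h                   # dLoss/dw2
    d_h = d_y * w2                   # error handed back to the hidden unit
    d_w1 = d_h * h * (1.0 - h) * x   # dLoss/dw1, through the sigmoid derivative

    # Each weight moves in proportion to its share of the error.
    return w1 - lr * d_w1, w2 - lr * d_w2, loss


def train(steps=200, lr=0.5):
    w1, w2 = 0.3, -0.2      # arbitrary starting weights (assumption)
    x, target = 1.0, 0.8    # a single made-up training example (assumption)
    losses = []
    for _ in range(steps):
        w1, w2, loss = train_step(w1, w2, x, target, lr)
        losses.append(loss)
    return losses


losses = train()
print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.6f}")
```

A real network repeats the same bookkeeping across millions of weights and many layers. The principle does not change with size.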

The second was the sequential bottleneck. Language models before 2017 read text one word at a time, each prediction waiting for the last. This was not just slow. The vanishing gradient problem meant the network tended to forget the beginning of a long passage before reaching the end. More importantly, the serial architecture made it hard to exploit modern GPU farms: thousands of parallel processors sat largely idle while the model waited for word n−1 before beginning word n. Training on internet-scale text would have been impractical.

The Vaswani paper removed the bottleneck. Self-attention let the model look at every word in a sentence simultaneously, computing in a single pass how strongly each word relates to every other. The canonical example: “The animal didn’t cross the street because it was too tired.” What does “it” refer to? Self-attention links “it” directly to “animal,” without recurrence, by weighting every pair of tokens in the sequence against each other. The computation is embarrassingly parallel. You can run it across ten thousand GPU cores at once.
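
The pairwise weighting can be sketched as scaled dot-product attention, the core operation the paper describes. This is a simplified illustration under stated assumptions: the two-dimensional vectors are hand-picked so that “it” sits close to “animal,” and the learned query, key, and value projections and the multiple heads of a real transformer are omitted. In a trained model those relationships are learned, not assigned.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a whole sequence at once."""
    d_k = len(keys[0])
    width = len(values[0])
    weights, outputs = [], []
    # Each row depends only on its own query, so rows could run in parallel.
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        row = softmax(scores)
        weights.append(row)
        outputs.append(
            [sum(w * v[j] for w, v in zip(row, values)) for j in range(width)]
        )
    return outputs, weights


# Hypothetical embeddings, chosen by hand for illustration only.
tokens = ["animal", "street", "it"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]

outputs, weights = self_attention(vectors, vectors, vectors)
it_row = dict(zip(tokens, weights[2]))
print(it_row)
```

With these vectors, the row for “it” puts more weight on “animal” than on “street.” No step in the loop waits on a previous token, which is the property that lets the work spread across many processors.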

Once the parallelization ceiling was gone, a third force became operative: the scaling hypothesis. Rich Sutton’s The Bitter Lesson (2019) and Kaplan et al.’s Scaling Laws for Neural Language Models (2020) made the same empirical observation from different directions: the most reliable lever for improving a language model is scale, meaning more parameters, more data, more compute. Almost nothing else matters as much. Common Crawl, Wikipedia, Reddit, every digitized book, every arXiv preprint. Feed it all in. The map kept growing.
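
Kaplan et al. report that loss falls as a power law in model size, roughly L(N) = (N_c / N)^α. The sketch below shows what that shape implies. The constants `N_C` and `ALPHA` are placeholders chosen for illustration, not the paper's fitted values, and the function name is hypothetical.

```python
def power_law_loss(n_params, n_c, alpha):
    """Loss as a power law in parameter count: L(N) = (N_c / N) ** alpha."""
    return (n_c / n_params) ** alpha


# Illustrative constants only (assumption), not fitted values from any paper.
N_C = 1e14
ALPHA = 0.08

sizes = [1e8, 1e9, 1e10, 1e11]
losses = [power_law_loss(n, N_C, ALPHA) for n in sizes]

# Under a power law, every tenfold increase in size multiplies the loss
# by the same constant factor, 10 ** -ALPHA.
ratios = [losses[i + 1] / losses[i] for i in range(len(losses) - 1)]

for n, loss in zip(sizes, losses):
    print(f"{n:.0e} parameters -> loss {loss:.3f}")
```

The returns diminish but never stop, and they are predictable in advance. That predictability is what made scale look like a lever rather than a gamble.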

The genius was not any single paper. It was the realization that attention enabled scaling, and scaling produced the map.

III. What the map actually is

At the end of training, a large language model is a statistical compression of the digitized written record of the human species, or as much of it as could be gathered. Not a metaphor. The parameters encode the relations between the concepts humans have written down, weighted by frequency of co-occurrence, strength of mutual prediction, stability of association across trillions of tokens of text. The model contains the form of every argument, the cadence of every register, the canonical objections to every position. It contains what fluent humans tend to say about the world.

This is the 1:1 map. Possibly denser.

It does not contain, and is not constructed to contain, the world those fluent humans were writing about. A model that has absorbed every climatology paper has the map of climatology discourse — the vocabulary, the frameworks, the canonical debates, the typical errors. It does not have the climate. A model that has read every legal opinion has the map of legal reasoning; it does not have the case in front of it. A model that has read every account of grief has the map of grief; it has not grieved.

This is not a deficiency. It is a description of what the artifact is.

IV. The category error eating the conversation

The category error eating the public conversation about AI is treating fluency about the world as knowledge of the world.

The distinction has always existed. What is new is its scale. When a human being conflates what they have read about a subject with the subject itself, it is a familiar and correctable mistake — Alfred Korzybski’s “the map is not the territory,” the student who has absorbed the secondary literature without encountering the primary phenomenon. When a machine can produce, on demand, arbitrarily fluent discourse on any subject, and when that fluency is good enough to pass for expertise in most low-stakes contexts, the confusion becomes systemic.

The danger is not that LLMs are wrong. They are, sometimes, and that problem is largely mechanical — better retrieval, better grounding, better verification. The danger is that they are persuasive enough to make us forget to check the territory. Every benchmark passed, every capability added, every new paper on emergent reasoning: the map keeps getting more detailed. What gets harder to remember is that none of that movement happens in the direction of the territory. It happens in the direction of a more complete map.

There is a name for what happens when a map becomes detailed enough that it starts to overwrite the territory in the minds of its users. Borges had it in 1946. He called it the desert.

The right relationship to the map — the one Borges prescribed by implication, by letting the map decay into the sand — is not suspicion. A queryable compression of everything humans have ever written down is a real cultural achievement: the first artifact in history that gives us back our own collective discourse in navigable form. The right relationship is orientation. The map is a tool for moving through known terrain faster. The moment you stop checking whether the map agrees with what you see through the windshield, the map is no longer navigation. It is fiction.

And the places where the map and the territory most sharply diverge — the questions the model answers with unusual fluency but unusual sameness — are precisely where the world we have not yet written about lives.

Those gaps are where everything actually interesting still happens.

Further reading

- Jorge Luis Borges — Dreamtigers (El Hacedor) (1960)

- Vaswani et al. — Attention Is All You Need (2017)

- Rumelhart, Hinton & Williams — Learning Representations by Back-Propagating Errors (1986)

- Kaplan et al. — Scaling Laws for Neural Language Models (2020)

- Rich Sutton — The Bitter Lesson (2019)

- Alfred Korzybski — Science and Sanity (1933)