Updated 2026-05-11 — Added Édith Piaf closing reference (v1.1).

Here is the fantasy in its most seductive form: you wake up in your twenty-two-year-old body with everything you know now. Every mistake you’ve made, every silence that should have been words, every door you walked through and every door you didn’t — all of it available as hindsight. What would you change?

I ran this exercise on my own life. Seriously, not rhetorically. I picked moments: the ones that still have weight, the ones that show up in the three-in-the-morning inventory. And each time I tried to intervene, I discovered the same thing: the moment I wanted to fix was not self-contained. The person I became traces back to someone I only met because of a party I almost didn’t attend, because of an argument that happened because of the decision I now want to undo. The love that shaped me most was downstream of a failure I would have prevented. The work I am proudest of came from a rejection that, at the time, felt definitive.

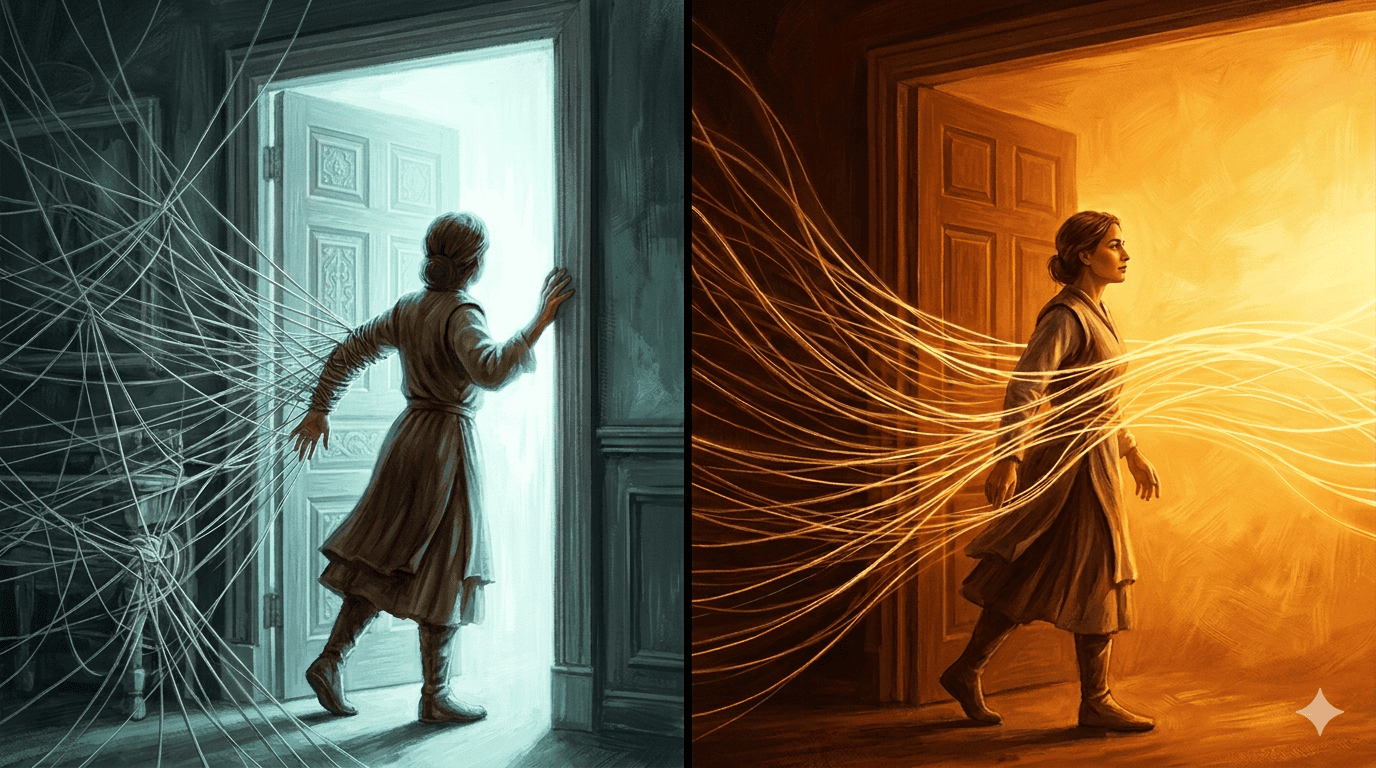

Tug one thread and the whole weave shifts. I couldn’t find a single inflection point I could intervene at without losing something I would not trade. This is not consolation. It is structure.

I. The experiment.

The problem with the fantasy is not that it’s impossible. Set aside the physics. The problem is that it rests on a false premise: that you know what the past was.

You don’t. You know what you observed of it — which is something considerably smaller. Memory is not a recording. It is a reconstruction, performed in the present, shaped by everything that happened between the event and the recall. The you that remembers the argument is not the you that had it. The account has been edited by grief, perspective, sleep, and the hundred subsequent experiences that retroactively reframed what the argument meant.

This is not a failure of memory. This is what memory is.

II. Shannon: the signal is not the thing.

Claude Shannon’s A Mathematical Theory of Communication (1948) established something simple and devastating: every channel has finite capacity. Try to push more through than the channel can carry and the excess is lost for good. The signal arrives at the receiver as a degraded version of what was sent: compressed, partially corrupted, shorn of information that existed in the original but did not survive transmission.

Your memory of the past is a received signal. The original — the actual event, in its full dimensionality — included the internal state of every other person in the room, the hormonal landscape of your own body, the thousand prior moments that loaded the context, the economic and atmospheric conditions that framed the hour. None of that was encoded. You received a lossy first-person compression of an n-dimensional event, and you have been working from that compression ever since.

Shannon defined entropy as a measure of uncertainty: roughly, how much information it takes on average to pin down what a source will produce. The past, as a system, has enormous entropy, not because it was random, but because the information required to describe it completely is orders of magnitude larger than what any single observer could receive. When you propose to “go back and fix it,” you are proposing to solve an equation where you know, at best, a few of the variables. The missing variables are not footnotes. They are the system.
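For readers who want the definition in concrete form, here is a minimal sketch in Python. The function name `shannon_entropy` and the two coin examples are my own illustrations, not anything from Shannon’s paper; the only thing borrowed is the formula, entropy as the negative sum of p·log2(p) over the possible outcomes.

```python
import math
from collections import Counter


def shannon_entropy(observations):
    """Entropy, in bits, of the empirical distribution of a sequence."""
    counts = Counter(observations)
    total = len(observations)
    # Sum p * log2(p) over each distinct outcome, then negate.
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical data: a fair coin and a heavily loaded one.
fair_coin = ["H", "T"] * 50
loaded_coin = ["H"] * 99 + ["T"]

print(shannon_entropy(fair_coin))    # one full bit of uncertainty per toss
print(shannon_entropy(loaded_coin))  # far less: the outcome is nearly known
```

The more evenly the possibilities are spread, the more bits it takes to say which one actually happened. A remembered event is closer to the fair coin than it feels: most of what would settle the question was never observed.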

Edward Lorenz demonstrated in 1963 what this means in practice: in a chaotic system, tiny perturbations in initial conditions grow into radically different trajectories. The butterfly effect is not a metaphor. It is a mathematical property of chaotic nonlinear systems, and a human life has every appearance of being one. The intervention you imagined as surgical (a different word here, a different decision there) would have propagated through the system in ways no hindsight can model, because hindsight is working from the compressed signal, not the original state.
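Lorenz’s own system was a three-variable model of convection. The sketch below swaps in something simpler, the logistic map with r = 4, which is a standard textbook example of the same sensitivity. The function names, the starting value 0.2 and the nudge of one part in ten billion are all assumptions chosen for illustration.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x) and return every value visited."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def gaps(x0, nudge, steps):
    """Step-by-step distance between a trajectory and a slightly nudged copy."""
    original = logistic_trajectory(x0, steps)
    nudged = logistic_trajectory(x0 + nudge, steps)
    return [abs(a - b) for a, b in zip(original, nudged)]


# Two starting points that differ by one part in ten billion.
divergence = gaps(0.2, 1e-10, 100)

print(divergence[5])    # still microscopic after a few steps
print(max(divergence))  # eventually comparable to the whole range of the map
```

Nothing random happens here. The rule is fixed and fully known, and the two runs still end up unrelated. Hindsight has a far larger error in its starting conditions than one part in ten billion.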

Gregory Bateson defined information as “a difference that makes a difference.” Your memory of the past is a record of differences that registered — the ones that crossed the threshold of attention and encoding. Everything below that threshold is gone. You cannot replay what was never recorded.

III. Von Neumann: the program running on old hardware.

John von Neumann’s work on self-reproducing automata offers a different frame. A program run in a new environment can produce different outputs even with identical instructions, because the environment is part of the computation. The “you” that would return to the past is a program compiled in 2026 attempting to execute on 1994 hardware, in a 1994 environment, interacting with 1994 inputs it cannot fully predict. The program may not run at all. The architecture it assumes may not exist.

Von Neumann also worked on iterative numerical methods, where complex solutions emerge not from getting it right on the first pass but from successive approximation. This is the architecture of everything from cellular biology to neural networks. Stochastic gradient descent, the standard method by which machine learning systems improve, does not jump to the optimal solution. It takes a small step in the direction of reduced error, re-evaluates, and steps again. The process requires failure. An algorithm that jumped back to a prior state every time it erred would never converge; it would spend its entire budget restarting rather than refining.
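A toy version makes the contrast visible. This is a sketch under stated assumptions, not how any real training system is built: the function `sgd_fit_slope`, the `rewind` flag, the noiseless data on the line y = 3x, and the learning rate are all hypothetical choices of mine. The “rewind” mode is a deliberate caricature of going back: before every step it discards what has been learned and starts again from zero.

```python
import random


def sgd_fit_slope(xs, ys, steps=500, lr=0.1, rewind=False, seed=0):
    """Fit y = w * x by stochastic gradient descent on squared error."""
    rng = random.Random(seed)  # local generator so runs are repeatable
    w = 0.0
    for _ in range(steps):
        if rewind:
            w = 0.0  # caricature of "going back": throw away all progress
        i = rng.randrange(len(xs))       # pick one example at random
        error = w * xs[i] - ys[i]        # how wrong the current guess is
        w -= lr * 2.0 * error * xs[i]    # small step against the gradient
    return w


# Hypothetical data: points on the line y = 3x.
xs = [i / 10 for i in range(1, 21)]
ys = [3.0 * x for x in xs]

forward = sgd_fit_slope(xs, ys)
rewound = sgd_fit_slope(xs, ys, rewind=True)

print(forward)  # settles very close to 3
print(rewound)  # never gets further than a single step from zero
```

Both runs see the same errors. Only one of them keeps what the errors taught it.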

The convergent system is not the one that avoids errors. It is the one that uses errors as signal.

IV. Reincarnation, read through information theory.

The theological framing of reincarnation holds that a soul returns to learn what it failed to learn. The information-theoretic framing is structurally identical but strips the supernatural machinery.

If consciousness is a pattern of information — and there is no compelling alternative on offer — then the pattern that encodes learning survives better than the pattern that does not. What the various traditions call karma is the weight initialization of the next run: the unresolved questions, the unintegrated losses, the half-completed computations that the prior iteration left open. Each life — or each moment, or each generation — is a fresh initialization with some inherited parameters. Not a clean start. Not a replay. A new forward pass from a prior accumulated state.

Shannon and von Neumann did not write about reincarnation. Shannon was notoriously private about metaphysics; von Neumann, whose deathbed turn to Catholicism was by most accounts a pragmatic one, was skeptical of anything he couldn’t formalize. I am not putting words in their mouths. I am noting that their frameworks describe exactly the architecture the older traditions were pointing at: a system that encodes, loses, learns, and iterates; that cannot return to a prior state without destroying the information accumulated since; that converges not by rewinding but by continuing.

David Deutsch argued that a time-travel intervention doesn’t repair a timeline; it puts you in a different one. The past you wanted to fix remains fixed in its branch. The intervention opens a branch where you exist and the original failure does not, but that branch is not the life you have lived. Your life is the path from here forward.

V. (Coda) Regret, and what it’s pointing at.

Regret is the subjective experience of believing that a different input would have produced a better output — while lacking access to the full model. It is epistemically understandable. It is practically useless in the direction it points.

The exercise I ran — find me the moment I could change without losing what I would not trade — failed in an instructive way. The failures were load-bearing. Not because suffering is good, but because information is dense and interconnected, and the same thread that feels like the wrong choice is often what connected the next several things. The structure of the life you have is not a consolation prize for the life you didn’t. It is the only dataset you have, and it took everything that happened to generate it.

Regret points backward. Iteration points forward. They are the same computation — the recognition that a different input would have produced a different output — run in different directions. The coherent move is to keep running it forward: take the error as signal, adjust the weights, step again.

This essay was worked out in conversation with an AI assistant called Claude. Whether Claude is named after Claude Shannon, Anthropic has not confirmed. It would be a plausible and fitting tribute: Shannon’s information theory underlies much of what a large language model does, with every token predicted from a compressed representation of prior context and every response a forward pass through weights shaped by accumulated error. The uncertainty about the naming is itself a small demonstration of the argument. We don’t know the full state. We work from the signal we received. We run forward from there.

Édith Piaf settled this long before information theory. Non, je ne regrette rien is not a claim that nothing went wrong. It is a forward declaration: the ledger is closed — not because the losses don’t count, but because the accounting is done and the next step is already in motion.

There is no going back. There is only the next step of a descent that has been under way since before you were initialized.

Further reading

- Claude Shannon & Warren Weaver — The Mathematical Theory of Communication (1949)

- David Deutsch — The Fabric of Reality (1997)

- Gregory Bateson — Steps to an Ecology of Mind (1972)

- James Gleick — The Information (2011)